Google just released Nano Banana 2, officially named Gemini 3.1 Flash Image, and the AI image generation landscape shifted overnight. This is not a minor upgrade. Nano Banana 2 combines the advanced capabilities of Nano Banana Pro with the generation speed of Gemini Flash, delivering studio-quality images in a fraction of the time. The model is now rolling out across Google products and is available today on inference.sh as a serverless skill.

What makes this release significant is the combination of features that were previously exclusive to the slower Pro model. Advanced world knowledge, precise text rendering, subject consistency across multiple images, and production-ready resolution options now all run at Flash speed. For anyone building applications that need high-quality image generation without the latency penalty, this changes the calculus.

This post breaks down what Nano Banana 2 actually delivers, why the results look so much better than its predecessor, and how you can integrate it into your agent workflows today.

What Nano Banana 2 Actually Is

Nano Banana 2 is Google DeepMind's latest image generation model, officially named Gemini 3.1 Flash Image. It launched on February 26, 2026, and represents a genuine convergence of two previously separate product lines. The original Nano Banana became a viral sensation in August 2025, redefining what people expected from AI image editing. Then Nano Banana Pro arrived in November 2025, offering advanced intelligence and studio-quality creative control at the cost of slower generation times.

Nano Banana 2 eliminates that tradeoff. You get Pro-level capabilities running on Flash architecture, which means rapid iteration without sacrificing output quality. The practical result is images that match what Nano Banana Pro produces but generate fast enough for real-time editing workflows.

The model accepts up to 14 input images alongside text prompts, making complex editing and composition workflows possible in a single generation call. It outputs images from 512 pixels up to native 4K resolution, and supports aspect ratios from 1:1 to 16:9 and beyond. Text rendering - a notorious weak point for image generators - has been significantly improved, with legible output even for marketing copy and greeting cards.
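The limits above lend themselves to a small pre-flight check before a request is sent. The following is a minimal sketch, not part of any official SDK: `build_request` and the `images` key are hypothetical names, while `prompt`, `aspect_ratio`, `resolution`, and `num_images` mirror the keys used in the SDK example later in this post. Only the 14-image cap comes from the model description; the accepted `resolution` values are not documented here, so the value is passed through unchecked.

```python
import re

MAX_INPUT_IMAGES = 14  # limit stated in this post

# Accepts ratios written as "W:H" with positive integers, e.g. "16:9".
_RATIO = re.compile(r"^[1-9]\d*:[1-9]\d*$")


def build_request(prompt, image_paths=(), aspect_ratio="1:1", resolution="high"):
    """Return a request dict, raising ValueError on obviously bad input."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if len(image_paths) > MAX_INPUT_IMAGES:
        raise ValueError(
            f"at most {MAX_INPUT_IMAGES} input images, got {len(image_paths)}"
        )
    if not _RATIO.match(aspect_ratio):
        raise ValueError(f"aspect_ratio should look like '16:9', got {aspect_ratio!r}")
    request = {
        "prompt": prompt,
        "aspect_ratio": aspect_ratio,
        "resolution": resolution,  # assumption: allowed values are not validated
        "num_images": 1,
    }
    if image_paths:
        request["images"] = list(image_paths)  # hypothetical key name
    return request
```

Failing fast on the client side avoids spending a generation call on a request that would be rejected anyway.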

Why the Results Look Different

Nano Banana 2 builds on previous versions with what Google describes as enhanced world knowledge integration, bringing real-time search grounding to image generation.

Advanced world knowledge is the most significant improvement. The model pulls from Google's knowledge base and can ground generation in real-time web search results. Ask for the Museum Clos Luce in a specific art style and it knows what that museum actually looks like. Request an infographic about cloud types and it understands the visual and factual difference between cumulus, stratus, and cirrus formations. This grounding in reality makes the outputs genuinely useful for educational content, reference materials, and accurate visualizations.

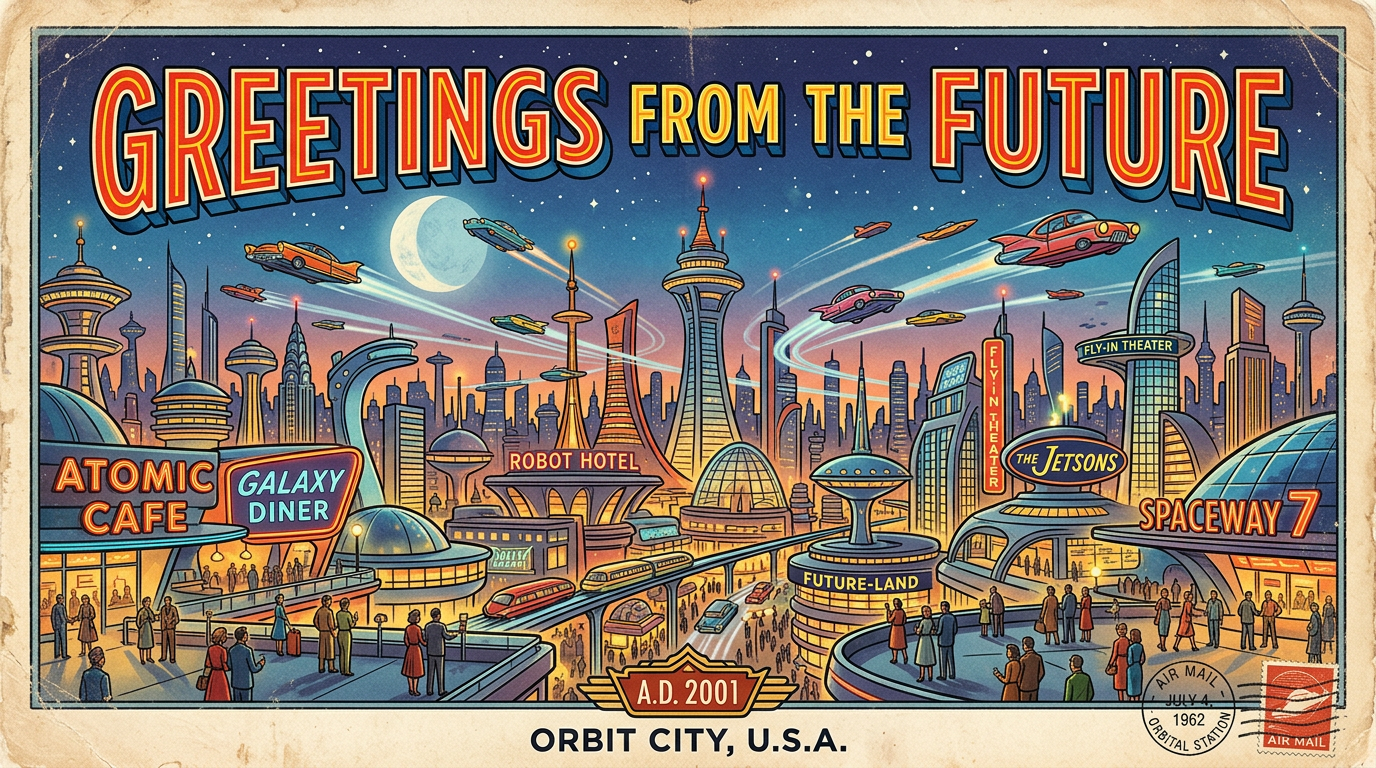

Precision text rendering solves one of the hardest problems in AI image generation. Previous models would produce garbled or illegible text more often than not. Nano Banana 2 generates accurate, readable text that you can actually use in marketing mockups, product labels, and signage. The model also handles text translation - generate an image with English text, then regenerate with Hindi, Spanish, or dozens of other languages while keeping the visual style consistent.

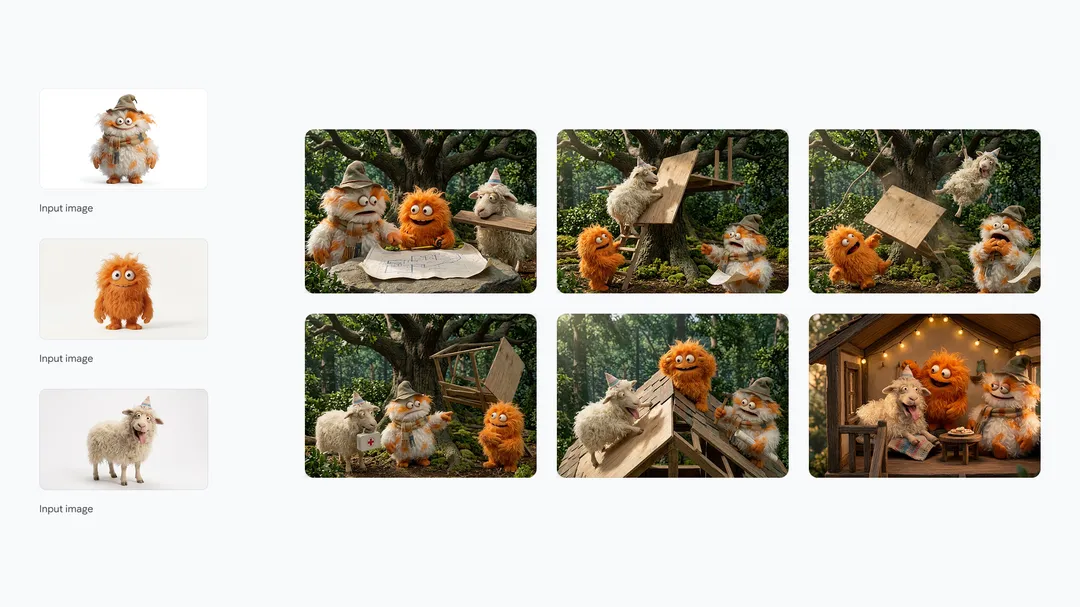

Subject consistency allows you to maintain character resemblance across multiple images. The model can preserve the likeness of up to five characters and the fidelity of up to fourteen objects in a single workflow, keeping them visually consistent across different scenes, angles, and lighting conditions. This makes storyboarding, comic creation, and brand asset generation practical without the painful manual work of maintaining character sheets.

Enhanced Creative Control

Beyond accuracy improvements, Nano Banana 2 dramatically expands what creators can control. The gap between professional design tools and AI generation has been narrowing, and this release closes it further.

Precise instruction following means the model adheres more strictly to complex requests. Earlier versions would often interpret prompts loosely, producing images that captured the general idea but missed specific details. Nano Banana 2 follows nuanced instructions more faithfully - if you specify a particular camera angle, lighting setup, and color palette, the output respects all of them rather than picking and choosing.

Production-ready specifications cover the practical requirements of real creative work. Full control over aspect ratios from square social posts to widescreen backdrops. Resolution scaling from 512 pixels for previews up to 4K for final assets. Multiple output formats. These options mean you can generate assets that actually fit into professional pipelines without post-processing.

Visual fidelity upgrades round out the improvements. Vibrant lighting, richer textures, and sharper details come through even at Flash generation speeds. The aesthetic quality matches what Nano Banana Pro delivered, but you get results in seconds rather than waiting through a longer generation queue.

The multi-image input capability deserves special attention. You can feed the model up to fourteen reference images and it will extract and combine visual elements from all of them. This enables workflows like taking a product photo, a background reference, a lighting example, and a style guide image, then generating a complete composite in one call. No layers. No manual compositing. No separate passes for different elements.
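One way to keep a multi-reference request readable is to state each image's role in the prompt itself. The sketch below assumes, without confirmation from any official documentation, that numbered role labels help the model tell references apart; `Reference` and `compose_prompt` are hypothetical helpers, and how the image files are actually attached to the request is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reference:
    path: str  # local file or URL of the reference image
    role: str  # e.g. "product photo", "background reference"


def compose_prompt(instruction, references):
    """Prefix the instruction with one line per reference describing its role."""
    if len(references) > 14:
        raise ValueError("the model accepts at most 14 reference images")
    lines = [f"Image {i}: {ref.role}." for i, ref in enumerate(references, start=1)]
    lines.append(instruction)
    return "\n".join(lines)


refs = [
    Reference("shoe.png", "product photo"),
    Reference("beach.jpg", "background reference"),
    Reference("golden_hour.jpg", "lighting example"),
    Reference("brand_style.png", "style guide"),
]
prompt = compose_prompt(
    "Place the product on the background, matching the lighting and style.", refs
)
```

The numbering follows the order of `refs`, so the images should be attached in the same order.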

Google Search Grounding

One feature that sets Nano Banana 2 apart from other image generators is its integration with Google Search. When enabled, the model can pull current information and reference images from the web to inform its generations. This is not just about accuracy - it fundamentally changes what the model can do.

Ask for "the current CEO of OpenAI" and the model can look that up rather than guessing from training data that may be outdated. Request "the new iPhone design" and it can reference actual images rather than hallucinating features. Generate "a diagram of the latest Mars rover" and it will attempt to match the real design rather than producing a generic rover concept.

For information-dense content like infographics, educational materials, and reference visualizations, this grounding capability transforms the model from a creative tool into something closer to a research assistant that can also draw. The combination of world knowledge from training data plus real-time search results produces outputs that are both visually compelling and factually grounded.

This search grounding is optional and can be toggled per request. For pure creative work where you want the model to imagine rather than reference reality, you can disable it. For anything that needs to reflect the real world accurately, turning it on adds a layer of verification that other image models simply do not have.
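In code, a per-request toggle usually amounts to one extra field on the request. The sketch below is illustrative only: `search_grounding` is a hypothetical key name and `with_grounding` a hypothetical helper, since this post does not document how the option is actually spelled.

```python
def with_grounding(request, enabled):
    """Return a copy of the request with the grounding flag set."""
    updated = dict(request)  # copy so the caller's dict is left untouched
    updated["search_grounding"] = bool(enabled)  # hypothetical key name
    return updated


base = {"prompt": "An infographic comparing cumulus, stratus, and cirrus clouds"}

# Factual content: turn grounding on.
factual = with_grounding(base, True)

# Pure creative work: leave the model free to imagine.
creative = with_grounding({"prompt": "A city built inside a giant seashell"}, False)
```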

Using Nano Banana 2 on inference.sh

We have made Nano Banana 2 available as a serverless skill on inference.sh. You can call it through the same interface you already use for other AI workloads - no GPU provisioning, no model weight downloads, no queue management. Write your prompt, attach your reference images if any, and get generated images back.

The skill installation is one command:

```bash
npx skills add https://github.com/inference-sh/skills --skill nano-banana-2
```

Once installed, your AI agents can access Nano Banana 2 capabilities through the infsh CLI. Basic text-to-image generation is straightforward:

```bash
infsh run nano-banana-2 --prompt "A moody aerial view of a misty valley with winding road and reflective water"
```

For more advanced workflows, the skill supports all of Nano Banana 2's capabilities. Multi-image input for composition and editing. Custom aspect ratios for specific output requirements. Resolution control from preview quality up to 4K. Google Search grounding for factually accurate generations.
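When an agent shells out to the CLI instead of importing an SDK, the basic `infsh run` call shown earlier can be wrapped in a few lines of Python. This is a sketch under stated assumptions: it uses only the `run` subcommand and the `--prompt` flag that appear in this post, `infsh_command` and `generate` are hypothetical helper names, and the format of whatever the CLI prints is not specified here, so the output is returned as raw text.

```python
import subprocess


def infsh_command(prompt, skill="nano-banana-2"):
    """Build the argument list for `infsh run <skill> --prompt <prompt>`."""
    return ["infsh", "run", skill, "--prompt", prompt]


def generate(prompt):
    """Run the CLI and return its standard output as text."""
    # Passing a list (and no shell=True) keeps quotes inside the prompt
    # from being interpreted by the shell.
    completed = subprocess.run(
        infsh_command(prompt), capture_output=True, text=True, check=True
    )
    return completed.stdout
```

With `check=True`, a non-zero exit from the CLI raises `subprocess.CalledProcessError`, which lets the calling agent decide whether to retry or surface the failure.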

The Python SDK provides programmatic access with streaming support for real-time progress tracking:

```python
from inference import Client

client = Client()
result = client.run("nano-banana-2", {
    "prompt": "A three-panel comic infographic comparing cloud types",
    "aspect_ratio": "16:9",
    "resolution": "high",
    "num_images": 1
})
```

This integration is particularly valuable for anyone building products that need image generation as a feature. Marketing tools that auto-generate social assets. E-commerce platforms that create product visualizations. Educational apps that produce diagrams and infographics. The API-first approach means you integrate once and your users get access to Google's most capable image model.

Where Nano Banana 2 Shines

The combination of speed, accuracy, and control makes Nano Banana 2 particularly well-suited for several workflows.

Marketing asset generation benefits from the text rendering improvements and production-ready specs. Generate mockups with actual readable copy, iterate quickly on concepts, and output assets at publication-ready resolution. The subject consistency features let you maintain brand characters across campaign materials.

Educational content creation takes advantage of the world knowledge and search grounding. Infographics that are factually accurate. Diagrams that reflect real structures. Visual explanations that combine creative illustration with grounded information. Teachers and content creators can produce materials that inform rather than mislead.

Product visualization works well with the multi-image input system. Feed in a product photo from multiple angles, a background context, and style references, then generate composite images that show the product in use. E-commerce listings, advertising, and documentation all benefit.

Rapid prototyping leverages the Flash speed. Designers can explore visual directions quickly, generating dozens of variations in the time it would take to manually sketch a few. The iteration speed changes how creative exploration happens - you can afford to try ideas that would be too slow to prototype manually.
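Exploring many directions at once is mostly a matter of generating the prompts systematically. The sketch below builds one prompt per combination of style and lighting; `prompt_variations` is a hypothetical helper, and the prompt template is only one possible phrasing, not a recommended format.

```python
from itertools import product


def prompt_variations(subject, styles, lightings):
    """Return one prompt per (style, lighting) combination."""
    return [
        f"{subject}, {style} style, {lighting} lighting"
        for style, lighting in product(styles, lightings)
    ]


prompts = prompt_variations(
    "A ceramic coffee mug on a wooden table",
    styles=["watercolor", "flat vector", "photorealistic"],
    lightings=["soft morning", "dramatic studio"],
)
# Three styles times two lighting setups gives six prompts to submit.
```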

Localization workflows use the text translation capability. Generate an image with copy in one language, then regenerate in additional languages while maintaining the visual design. Marketing teams working across regions can produce localized assets without redesigning from scratch.
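A localization batch can be prepared by holding the scene description fixed and varying only the copy. In this sketch the translated strings are supplied by the caller rather than produced by the model, and `localized_requests` is a hypothetical helper; the request keys mirror the SDK example in this post.

```python
def localized_requests(scene, copy_by_language, aspect_ratio="16:9"):
    """Build one request per language, keeping the scene description fixed."""
    requests = {}
    for language, copy in copy_by_language.items():
        requests[language] = {
            "prompt": f'{scene} The headline text reads "{copy}" in {language}.',
            "aspect_ratio": aspect_ratio,
        }
    return requests


batch = localized_requests(
    "A summer sale poster with a bright yellow background.",
    {"English": "Summer Sale", "Spanish": "Rebajas de verano"},
)
```

Keeping the scene text identical across languages is what gives the model the best chance of preserving the visual design between variants.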

Image Provenance and Identification

Google continues to advance its approach to identifying AI-generated content. Nano Banana 2 images carry SynthID watermarks - imperceptible markers embedded in the output that survive typical image processing like cropping and compression. Since launching in November 2025, the SynthID verification feature in the Gemini app has been used over 20 million times to identify Google AI-generated images, video, and audio.

The company is also implementing C2PA Content Credentials, an interoperable standard that provides metadata about how content was created. The combination of invisible watermarking (SynthID) and inspectable metadata (C2PA) gives users multiple ways to verify whether an image was AI-generated and by which model.

For applications where provenance matters - journalism, legal documentation, academic publishing - these verification tools provide a path to responsible use. The generated content carries its own proof of origin.

What This Means Going Forward

Nano Banana 2 represents the current state of the art for accessible image generation. The combination of Pro capabilities at Flash speeds makes high-quality AI imagery practical for workflows that could not tolerate the latency of earlier models. The search grounding feature adds a dimension of factual accuracy that purely generative models cannot match.

For developers building with image generation, the tradeoffs have shifted. You get near-Pro quality at Flash speed and cost. You can ground generations in reality when accuracy matters. You can maintain character consistency across multi-image workflows - all through a single API call.

We are excited to have Nano Banana 2 available on inference.sh. Install the skill, experiment with the capabilities, and build something interesting.

FAQ

What inputs does Nano Banana 2 accept?

Nano Banana 2 accepts text prompts alongside up to 14 reference images. The images can serve as editing targets, style references, subject references for consistency, or compositional elements to combine. You can also enable Google Search grounding to incorporate real-time web information into the generation process. Output options include multiple aspect ratios from 1:1 to 16:9, resolution scaling from 512px to 4K, and support for generating multiple images per request.

How does Nano Banana 2 compare to the original Nano Banana?

The original Nano Banana focused on image editing and iteration speed, becoming famous for its ability to modify existing images through conversation. Nano Banana Pro added advanced intelligence and studio quality but ran slower. Nano Banana 2 combines the best of both - you get near-Pro quality with world knowledge, text rendering, and subject consistency while running at Flash speed and cost. For most use cases, Nano Banana 2 replaces both previous models.

What is Google Search grounding and when should I use it?

Google Search grounding allows the model to query current web information during generation. Enable it when you need factual accuracy - generating images of real places, current events, specific products, or educational diagrams where correctness matters. Disable it for pure creative work where you want the model to imagine rather than reference reality. The toggle gives you control over whether generations should be grounded in the real world or allowed to explore freely.