ByteDance just released Seedream 5.0 Lite, and it represents a significant leap in controllable image generation. This is not an incremental update to the Seedream line - it introduces web-connected retrieval for real-time information grounding, dramatically improved instruction following, and native support for multi-image fusion workflows. The model is available today on inference.sh at $0.035 per image.

What makes Seedream 5 Lite compelling is how it handles complex creative requests. Previous image models would approximate your intent. Seedream 5 Lite actually follows it. Describe a specific composition with multiple elements, reference multiple input images, request batch generation with consistent subjects - the model delivers without the usual interpretation drift. For production workflows where precision matters, this changes what you can accomplish in a single generation call.

This post covers what Seedream 5 Lite actually delivers, the workflows it enables, and how to integrate it into your applications.

What Seedream 5 Lite Actually Is

Seedream 5.0 Lite is ByteDance's latest image generation model, released in late January 2026. It builds on the Seedream 4.x foundation but introduces capabilities that were previously unavailable in the series: web-connected retrieval for grounding generations in current information, multi-image input for complex composition workflows, and batch generation for creating coherent image sets.

The model accepts text prompts alongside up to 14 reference images. It outputs images at 2K or 3K resolution in eight preset aspect ratios, covering portrait, square, and landscape formats up to 21:9. Both text-to-image and image-to-image workflows are supported at the same price point, with streaming output available for real-time progress tracking.

The practical result is an image generator that can handle enterprise creative workflows without the back-and-forth iteration that simpler models require. Describe what you want, provide references if needed, and get outputs that match the brief.

Real-Time Knowledge Integration

The headline feature of Seedream 5 Lite is web-connected retrieval. The model can pull real-time information from the web to inform its generations, making it aware of current events, trending topics, and recently released products.

Ask for "the trending Crying Horse meme as a giant art installation on the Bund" and the model knows what that meme looks like and what Shanghai's Bund actually is. Request "the new iPhone design in a minimalist product shot" and it references current product imagery rather than hallucinating features from training data. Generate "an infographic about today's weather patterns" and it can incorporate actual meteorological information.

This grounding capability transforms what the model can produce for information-dense content. Educational materials stay factually accurate. Marketing assets reflect current trends. Product visualizations match real designs. The model becomes less of a pure imagination engine and more of a visual research assistant that can also render beautiful images.

Enhanced Instruction Following

Seedream 5 Lite dramatically improves how faithfully the model follows complex prompts. Earlier image generators would capture the general idea but miss specific details. Seedream 5 Lite adheres to nuanced instructions - camera angles, lighting setups, color palettes, spatial relationships, and compositional requirements all get respected rather than loosely interpreted.

The local editing capability deserves particular attention. Provide an image with marked regions (colored boxes or outlines) and instruct the model to modify specific areas. "Change the fruit in the blue box to grapes, the fruit in the green box to a sliced apple, and add a blueberry in the red box." The model executes each instruction in the correct location while preserving the rest of the image. This precision enables targeted edits without regenerating entire compositions.
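One way to keep region-based edit prompts unambiguous is to assemble them from a list of (marker color, instruction) pairs. The following is a minimal sketch rather than an official prompt format: `build_region_edit_prompt` is a hypothetical helper, and the sentence template it emits is an assumption about phrasing that works well, not something the model requires.

```python
def build_region_edit_prompt(edits):
    """Build one edit prompt from (marker_color, instruction) pairs.

    Hypothetical helper: the "In the <color> box, ..." template is an
    assumed phrasing, not a documented requirement of the model.
    """
    if not edits:
        raise ValueError("at least one region edit is required")
    sentences = []
    for color, instruction in edits:
        # Normalize whitespace and trailing periods so sentences join cleanly.
        instruction = instruction.strip().rstrip(".")
        sentences.append(f"In the {color} box, {instruction}.")
    # Ask explicitly for the untouched areas to be preserved.
    sentences.append("Leave everything outside the marked boxes unchanged.")
    return " ".join(sentences)


region_prompt = build_region_edit_prompt([
    ("blue", "change the fruit to grapes"),
    ("green", "change the fruit to a sliced apple"),
    ("red", "add a blueberry"),
])
print(region_prompt)
```

Keeping one instruction per marked region makes it easier to see which part of the prompt to adjust when a single region comes back wrong.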

For production workflows, this instruction fidelity means fewer generation attempts to get the output you need. The gap between what you describe and what you receive narrows substantially.

Multi-Image Input and Fusion

The multi-image input system opens workflows that were previously impractical. Feed the model up to 14 reference images and it will extract and combine visual elements from all of them intelligently.

Multi-reference blending lets you combine elements from different sources. Provide a model photo and a clothing reference, then instruct "replace the clothing in image 1 with the outfit from image 2." The model maintains the person's pose, lighting, and context while swapping the garment. Product photography, virtual try-on, and composite imagery become single-call operations.

Subject consistency maintains character or object resemblance across multiple generations. Upload character references and generate new scenes featuring those characters in different contexts, poses, and environments. The model preserves identifying features, making storyboarding, comic creation, and brand asset generation practical.

Style transfer works by providing style reference images alongside content descriptions. The model applies the visual aesthetic from your references to new subject matter, enabling consistent visual language across diverse content.
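Because fusion prompts refer to inputs by position ("image 1", "image 2"), it helps to build the request in one place and check the reference count before sending it. This is a sketch under assumptions: the payload keys (`prompt`, `images`, `size`, `aspect_ratio`) mirror the API examples in this post, the mapping of "image N" to list order is assumed, and `build_fusion_payload` and `MAX_REFERENCE_IMAGES` are illustrative names.

```python
MAX_REFERENCE_IMAGES = 14  # limit stated in this post


def build_fusion_payload(prompt, image_urls, size="2K", aspect_ratio="1:1"):
    """Return a request payload for a multi-image call.

    Assumption: "image 1" in the prompt corresponds to image_urls[0],
    "image 2" to image_urls[1], and so on.
    """
    image_urls = list(image_urls)
    if not image_urls:
        raise ValueError("provide at least one reference image")
    if len(image_urls) > MAX_REFERENCE_IMAGES:
        raise ValueError(
            f"got {len(image_urls)} reference images; "
            f"the limit is {MAX_REFERENCE_IMAGES}"
        )
    return {
        "prompt": prompt,
        "images": image_urls,
        "size": size,
        "aspect_ratio": aspect_ratio,
    }


fusion_payload = build_fusion_payload(
    "Replace the clothing in image 1 with the outfit from image 2.",
    ["https://example.com/model.png", "https://example.com/outfit.png"],
    aspect_ratio="3:4",
)
```

The resulting dictionary can be passed as the second argument to a call like the ones shown in the API section of this post.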

Batch Image Generation

Seedream 5 Lite supports sequential image generation - creating sets of related images in a single call. Request "generate 4 images of a courtyard corner across the four seasons" and receive four coherent images that share visual style but depict spring, summer, autumn, and winter variations.

This capability suits several workflows:

Series content like comic panels, storyboard frames, or tutorial sequences that need visual consistency across multiple images. Describe the narrative arc and receive a coordinated set.

Variation exploration for creative development. Generate multiple interpretations of a concept simultaneously, then select the direction to pursue. The batch shares enough visual DNA to feel like a coherent exploration rather than random outputs.

Brand asset sets that need to work together visually. Marketing campaigns, social media content calendars, and product launch materials can be generated as coordinated collections rather than assembled piecemeal.

Batch generation integrates with multi-image input, so you can provide reference images that inform the entire set while requesting variations across the batch.
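For sequential sets, the number of images requested in the prompt should match the number of variations listed. A small sketch of one way to guarantee that, using the seasons example from above: `build_series_prompt` is a hypothetical helper, and the wording of the generated prompt is an assumption rather than a required format.

```python
def join_with_and(items):
    """Join strings as natural-language list: 'a, b, and c'."""
    if len(items) == 1:
        return items[0]
    if len(items) == 2:
        return f"{items[0]} and {items[1]}"
    return ", ".join(items[:-1]) + f", and {items[-1]}"


def build_series_prompt(subject, variations):
    """Build a batch prompt whose image count always matches the variations."""
    variations = list(variations)
    if len(variations) < 2:
        raise ValueError("a series needs at least two variations")
    return (
        f"Generate {len(variations)} images of {subject}, "
        f"one each for {join_with_and(variations)}. "
        "Keep the visual style consistent across the set."
    )


series_prompt = build_series_prompt(
    "a courtyard corner", ["spring", "summer", "autumn", "winter"]
)
print(series_prompt)
```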

Deeper Scenario Adaptation

ByteDance specifically optimized Seedream 5 Lite for commercial and professional scenarios. The outputs reflect this focus.

E-commerce marketing benefits from the model's understanding of product photography conventions. Request dynamic beverage shots with splashing liquid, fashion photography with Korean minimalist aesthetics, or food styling with appetizing lighting - the model knows these visual languages and produces outputs that match professional standards.

Cinematic production work comes through in portrait and scene generation. Film grain, specific lens characteristics, dramatic lighting setups, and mood-driven color grading all render accurately. The model understands terms like "Kodak Portra 400 grain" or "Dutch angle with shallow depth of field" and applies them correctly.

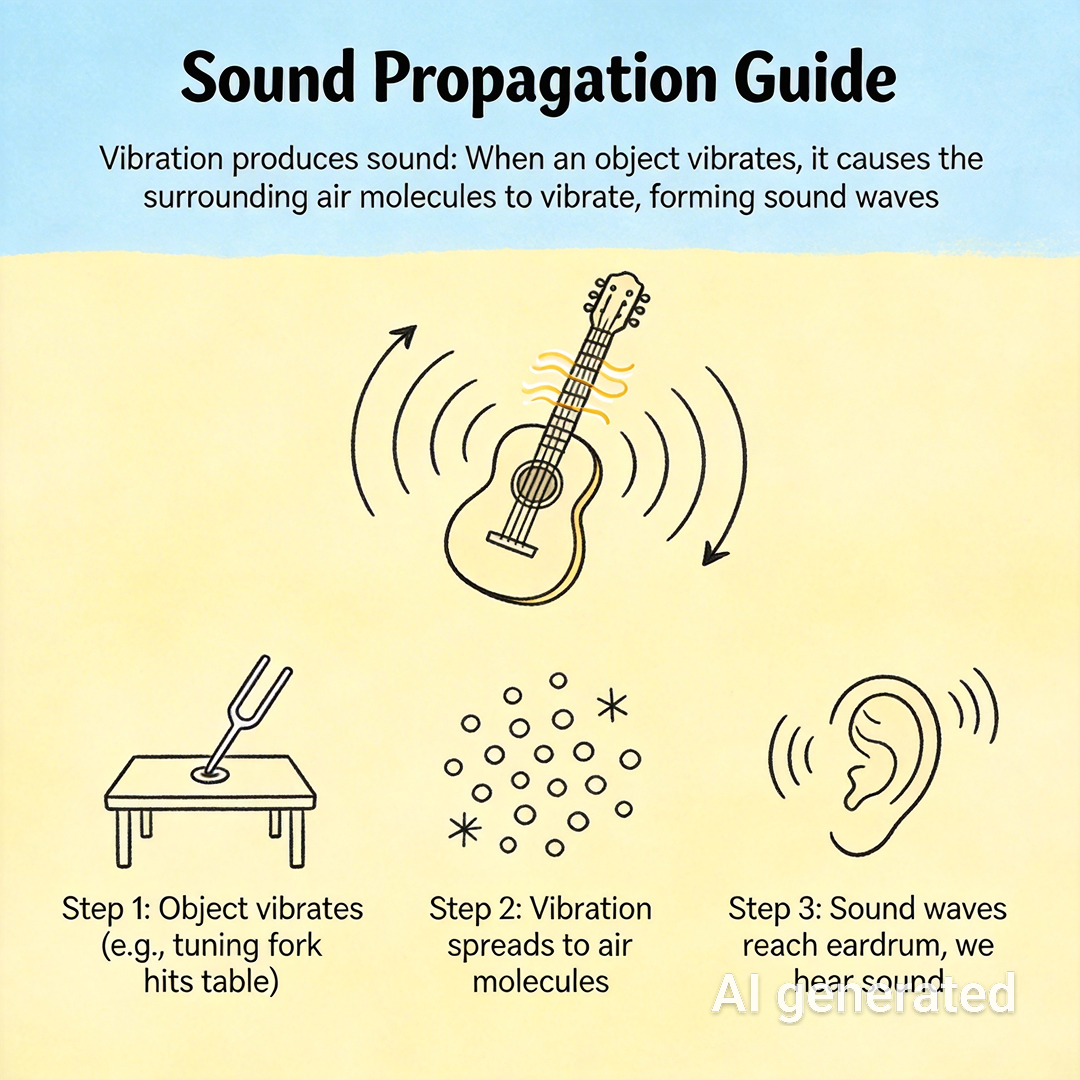

Design applications including infographics, educational diagrams, and technical illustrations benefit from the model's expanded world knowledge. Scientific concepts render accurately, visual hierarchies stay clear, and information architecture gets respected.

Using Seedream 5 Lite on inference.sh

Seedream 5 Lite is available on inference.sh as a serverless app. No GPU provisioning, no model management, no queue handling. Call the API, get images back.

Basic text-to-image generation:

```python
from inference import Client

client = Client()
result = client.run("bytedance/seedream-5-lite", {
    "prompt": "Vibrant close-up editorial portrait, model with piercing gaze, wearing a sculptural hat, rich color blocking, sharp focus on eyes, Vogue magazine cover aesthetic",
    "size": "2K",
    "aspect_ratio": "3:4",
    "output_format": "png"
})
```

Image-to-image with reference:

```python
result = client.run("bytedance/seedream-5-lite", {
    "prompt": "Keep the model's pose unchanged. Change the clothing material from metal to transparent water. Through the liquid, the model's skin details are visible.",
    "images": ["https://example.com/reference.png"],
    "size": "2K",
    "aspect_ratio": "1:1"
})
```

Multi-image fusion for product photography:

```python
result = client.run("bytedance/seedream-5-lite", {
    "prompt": "The model in Image 1 holds the lipstick from Image 2 for a product display shot. Camera focused on lipstick, professional lighting, solid color background.",
    "images": [
        "https://example.com/model.png",
        "https://example.com/product.png"
    ],
    "size": "2K",
    "aspect_ratio": "4:3"
})
```

The API supports all aspect ratios from 1:1 to 21:9, both 2K and 3K resolutions, PNG and JPEG output formats, and optional watermarking for content identification.

Resolution and Aspect Ratio Options

Seedream 5 Lite offers precise control over output dimensions through resolution tier and aspect ratio selection:

| Aspect Ratio | 2K Dimensions | 3K Dimensions |
|---|---|---|
| 1:1 | 2048×2048 | 3072×3072 |
| 3:4 | 1728×2304 | 2592×3456 |
| 4:3 | 2304×1728 | 3456×2592 |
| 16:9 | 2848×1600 | 4096×2304 |
| 9:16 | 1600×2848 | 2304×4096 |
| 3:2 | 2496×1664 | 3744×2496 |
| 2:3 | 1664×2496 | 2496×3744 |
| 21:9 | 3136×1344 | 4704×2016 |

These preset combinations ensure optimal quality at each dimension. The 2K tier suits most web and social applications. The 3K tier provides additional resolution for print, large displays, and assets that need cropping flexibility.
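If your application needs the pixel dimensions ahead of time, for example to reserve layout space, the table can be encoded as a lookup. This is a sketch that simply restates the table values; `DIMENSIONS` and `output_dimensions` are illustrative names, not part of any SDK.

```python
# (width, height) in pixels, copied from the table in this post.
DIMENSIONS = {
    "1:1":  {"2K": (2048, 2048), "3K": (3072, 3072)},
    "3:4":  {"2K": (1728, 2304), "3K": (2592, 3456)},
    "4:3":  {"2K": (2304, 1728), "3K": (3456, 2592)},
    "16:9": {"2K": (2848, 1600), "3K": (4096, 2304)},
    "9:16": {"2K": (1600, 2848), "3K": (2304, 4096)},
    "3:2":  {"2K": (2496, 1664), "3K": (3744, 2496)},
    "2:3":  {"2K": (1664, 2496), "3K": (2496, 3744)},
    "21:9": {"2K": (3136, 1344), "3K": (4704, 2016)},
}


def output_dimensions(aspect_ratio, size="2K"):
    """Look up (width, height) for a preset aspect ratio and resolution tier."""
    try:
        return DIMENSIONS[aspect_ratio][size]
    except KeyError:
        raise ValueError(
            f"unsupported combination: aspect_ratio={aspect_ratio!r}, size={size!r}"
        ) from None


width, height = output_dimensions("16:9", "3K")
print(width, height)  # 4096 2304
```

Validating the combination locally also catches typos such as an unsupported ratio before a request is made.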

Pricing

Seedream 5 Lite runs at $0.035 per generated image. This flat rate applies to both text-to-image and image-to-image workflows, regardless of resolution tier or number of input images. For batch generation, each output image in the batch counts separately.

At this price point, rapid iteration becomes economically practical. Generate dozens of variations to explore creative directions. Produce complete asset sets in single workflows. Use the model for concepting work that would previously require expensive manual exploration.
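Since every output image is billed separately at the flat rate, budgeting for a batch workflow is simple multiplication. The sketch below assumes only the $0.035 figure stated above; `estimate_cost` is a hypothetical helper, and `Decimal` is used to avoid floating-point rounding in currency math.

```python
from decimal import Decimal

PRICE_PER_IMAGE = Decimal("0.035")  # USD per generated image, as stated in this post


def estimate_cost(images_per_call, calls=1):
    """Estimate total cost in USD; each output image is billed separately."""
    if images_per_call < 1 or calls < 1:
        raise ValueError("images_per_call and calls must be at least 1")
    return PRICE_PER_IMAGE * images_per_call * calls


print(estimate_cost(4))            # one 4-image batch: 0.140
print(estimate_cost(4, calls=12))  # twelve 4-image batches: 1.680
```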

What This Means for Production Workflows

Seedream 5 Lite shifts what's practical for AI-assisted creative production. The combination of precise instruction following, multi-image fusion, batch generation, and real-time knowledge grounding covers workflows that previously required multiple tools or extensive manual intervention.

Marketing teams can generate campaign assets that actually match briefs. E-commerce operations can produce product visualizations at scale. Content creators can maintain character consistency across story sequences. Educational publishers can produce accurate infographics without manual fact-checking of visual elements.

The model does not replace creative direction - you still need to know what you want. But it dramatically reduces the gap between creative intent and generated output. Describe your vision precisely, provide relevant references, and receive images that execute on that vision.

We are excited to have Seedream 5 Lite available on inference.sh. The model represents the current state of the art for controllable, production-ready image generation.

FAQ

What inputs does Seedream 5 Lite accept?

Seedream 5 Lite accepts text prompts alongside up to 14 reference images. The images can serve as editing targets, style references, subject references for consistency, or compositional elements to combine. Output options include 2K and 3K resolutions, preset aspect ratios from 9:16 portrait to 21:9 ultrawide, and PNG or JPEG formats.

How does Seedream 5 Lite compare to Seedream 4.5?

Seedream 4.5 offered strong image generation with 2K and 4K output. Seedream 5 Lite adds web-connected retrieval for real-time information grounding, significantly improved instruction following, and native batch generation support. The 5 Lite model focuses on 2K and 3K output with enhanced control rather than maximum resolution.

What is web-connected retrieval?

Web-connected retrieval allows the model to query current web information during generation. This enables accurate depictions of recent events, trending content, current product designs, and factual information that may not be in training data. The feature makes the model suitable for time-sensitive content where accuracy matters.

Can I maintain character consistency across multiple images?

Yes. Provide character reference images and the model will maintain identifying features across new generations. This supports storyboarding, comic creation, brand mascot assets, and any workflow requiring consistent subjects in varying contexts.